Abstract: Because the target occupies only a small region of the image, it cannot be detected directly, so a different detection approach is used.

[Algorithm] Corner detection in small regions

-> Final result

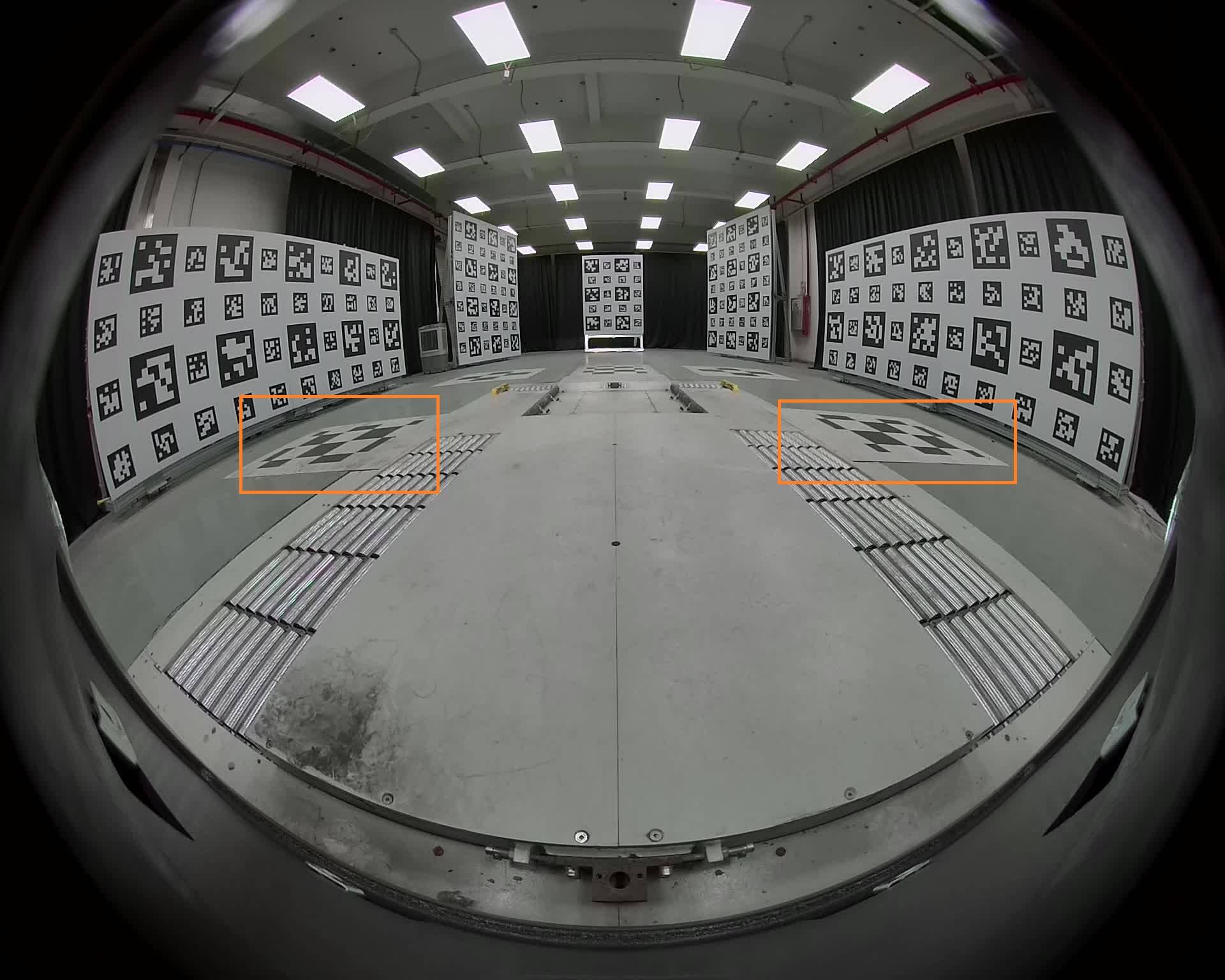

Input image:

Output image (the ground targets on either side of the center):

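Algorithm pipeline

- Detect the AprilTag targets and run solvePnP to obtain the initial parameters

- Use the initial extrinsics to build a bird's-eye view (BEV) for the single camera

- Select the ROI in the BEV

- Enlarge the ROI (it is too small to be detected otherwise)

- Run findChessboardCorners on the enlarged ROI (ROI_resized)

- Convert the corner coordinates:

- After obtaining corners_resized on ROI_resized, convert them to corners_ROI on the original ROI

- Convert corners_ROI to the BEV image, giving corners_BEV

- Convert corners_BEV to pixel coordinates in the original image, giving corners. Done.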
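
The three conversion steps above can be written compactly. This is a sketch that assumes the ROI is enlarged by a uniform factor $s$ and that the ROI's top-left corner sits at $(x_0, y_0)$ in the BEV. The symbols $s$, $x_0$, $y_0$, and $f_{\text{BEV}}^{-1}$ (the inverse of the BEV projection) are notation introduced here, not names from the original code.

$$
\begin{aligned}
p_{\text{ROI}} &= p_{\text{resized}} / s \\
p_{\text{BEV}} &= p_{\text{ROI}} + (x_0,\ y_0) \\
p_{\text{image}} &= f_{\text{BEV}}^{-1}(p_{\text{BEV}})
\end{aligned}
$$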

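Implementation details

Step 1 (AprilTag detection and obtaining the initial extrinsics) is skipped here because it is not the focus.

2. Build the single-camera bird's-eye view

OpenCV provides functions that can be called directly for this, such as cv2.getPerspectiveTransform and cv2.warpPerspective. The code used here is closed source and cannot be shared, but the result is the same. The front-view BEV is shown below: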

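3. Select the ROI in the BEV

After projection, the areas circled in the original image become regular patterns on the ground. The approximate extent is simply chosen by hand as a rectangle.

OpenCV has a dedicated rectangle type, cv::Rect.

It has four main fields: x, y, width, and height. x and y are the coordinates of the rectangle's top-left corner, and width and height are its size. These are the two ROIs I defined, since both the left and the right target need to be detected.

cv::Rect rect_left(448, 243, 120, 90); // left ROI

cv::Rect rect_right(725, 247, 95, 90); // right ROI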
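
Because the ROIs are picked by hand, it may be worth checking that they lie inside the BEV image before cropping, since cropping with an out-of-bounds rectangle fails at runtime. The sketch below shows the clamping logic in plain C++. `Roi` and `clampRoi` are hypothetical names used for illustration and mirror only the four fields of cv::Rect described above.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical stand-in for cv::Rect: top-left corner (x, y) plus size.
struct Roi {
    int x, y, width, height;
};

// Clamp a hand-picked ROI to an image of size imgWidth x imgHeight.
// Returns a zero-sized ROI when the rectangle and the image do not overlap.
Roi clampRoi(const Roi& r, int imgWidth, int imgHeight) {
    assert(imgWidth > 0 && imgHeight > 0);
    const int x0 = std::max(r.x, 0);
    const int y0 = std::max(r.y, 0);
    const int x1 = std::min(r.x + r.width, imgWidth);
    const int y1 = std::min(r.y + r.height, imgHeight);
    if (x1 <= x0 || y1 <= y0) return Roi{0, 0, 0, 0};
    return Roi{x0, y0, x1 - x0, y1 - y0};
}
```

With OpenCV types, the same intersection can be obtained with `rect & cv::Rect(0, 0, img.cols, img.rows)`.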

OpenCV can crop the image to the ROI directly:

cv::Mat SingleChartImage_full = cam->image; // original image

cvtColor(SingleChartImage_full, SingleChartImage_full_gray, COLOR_BGR2GRAY); // convert to grayscale

bev_gray = computeSingleCameraBEV(SingleChartImage_full_gray, rvec, tvec, ocam.K, ocam.D, ocam.xi, id, CarBevInfo.bev_size, CarBevInfo.bev_range, CarBevInfo.car_axis, CarBevInfo.car_height, CarBevInfo.car_width, 180.0, 110.0, filename); // BEV projection

cv::Rect rect_left(448, 243, 120, 90); // left ROI

cv::Rect rect_right(725, 247, 95, 90); // right ROI

SingleChartImage_roi_gray = bev_gray(rect_left).clone(); // crop the ROI

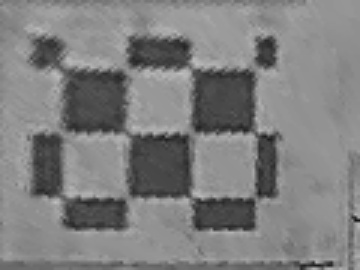

4. Enlarge the ROI

Uniform scaling is straightforward:

const double rect_scale = 3.0; // ROI magnification factor

cv::resize(SingleChartImage_roi_gray, SingleChartImage_roi_gray, cv::Size(), rect_scale, rect_scale);

cv::imwrite("../debug/0_resized_roi_" + name + ".jpg", SingleChartImage_roi_gray);

5. findChessboardCorners detection

For details, see the post "[Image] Corner detection via binarization".

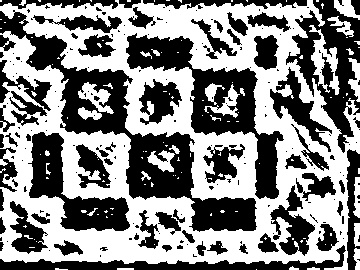

5.1 Binarization

The code:

cv::Mat binary;

double scale = 0.2; // tunable

int constValue = 0; // tunable

int blockSize = cvRound(MIN(SingleChartImage_roi_gray.cols, SingleChartImage_roi_gray.rows) * scale) | 1; // force an odd window size

blockSize = std::max(3, std::min(101, blockSize)); // clamp to [3, 101]

cv::Scalar mean = cv::mean(SingleChartImage_roi_gray);

if (mean[0] < 100) constValue = 5; // dark image: lower the threshold

if (mean[0] > 150) constValue = -5; // bright image: raise the threshold

cv::adaptiveThreshold(SingleChartImage_roi_gray, binary, 255, cv::ADAPTIVE_THRESH_GAUSSIAN_C, cv::THRESH_BINARY, blockSize, constValue);

cv::imwrite("../debug/" + std::to_string(id) + "_preprocessed_binary_" + name + ".jpg", binary);
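
The parameter selection above can be isolated into two small helpers, which makes it easier to check the values for a given ROI size. This is a minimal sketch in plain C++: `adaptiveBlockSize` and `thresholdOffset` are hypothetical names, and `std::lround` stands in for `cvRound`. The thresholds 100 and 150 and the range [3, 101] are taken from the code above.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>

// Pick an odd adaptive-threshold window size proportional to the ROI's
// shorter side, clamped to [3, 101]. Both bounds are odd, so the clamp
// cannot make the value even again.
int adaptiveBlockSize(int cols, int rows, double scale) {
    assert(cols > 0 && rows > 0 && scale > 0.0);
    const int rounded = static_cast<int>(std::lround(std::min(cols, rows) * scale));
    const int odd = rounded | 1;  // setting the lowest bit forces an odd value
    return std::max(3, std::min(101, odd));
}

// Constant C subtracted from the local weighted mean by adaptiveThreshold.
// A positive C lowers the effective threshold, a negative C raises it.
int thresholdOffset(double meanGray) {
    if (meanGray < 100.0) return 5;   // dark image
    if (meanGray > 150.0) return -5;  // bright image
    return 0;
}
```

For the left ROI enlarged three times (360 x 270 pixels) and scale = 0.2, this gives a 55-pixel window.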

Binarization result for the left ROI:

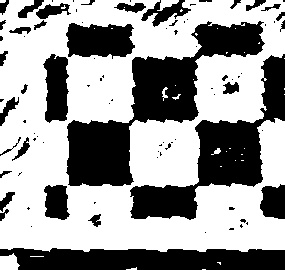

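5.2 Corner detection

found = findChessboardCorners(binary, cv::Size(tag.width, tag.height), corners, CALIB_CB_ADAPTIVE_THRESH | CALIB_CB_NORMALIZE_IMAGE); // patternSize is the number of inner corners per row and column

The detections are stored in corners, but they are in the coordinates of the resized ROI and are fairly coarse, so refine them to sub-pixel accuracy: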

find4QuadCornerSubpix(SingleChartImage_roi_gray, corners, Size(5, 5));

cv::Mat roi_with_corners = SingleChartImage_roi_gray.clone();

if (roi_with_corners.channels() == 1) {

cv::cvtColor(roi_with_corners, roi_with_corners, cv::COLOR_GRAY2BGR);

}

for (size_t j = 0; j < corners.size(); ++j) {

cv::circle(roi_with_corners, Point(corners[j].x, corners[j].y), 2, Scalar(0, 0, 255), 1, 1);

cv::putText(roi_with_corners, std::to_string(j), Point(corners[j].x + 5, corners[j].y - 5), cv::FONT_HERSHEY_SIMPLEX, 0.4, Scalar(0, 255, 0), 1);

}

cv::imwrite("../debug/" + std::to_string(id) + "_roi_corners_debug_" + name + ".jpg", roi_with_corners);

std::cout << "Saved ROI image with front-view corner markers" << std::endl;

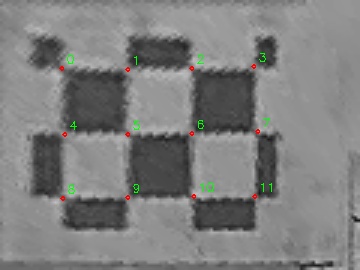

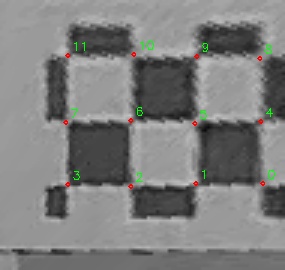

The result:

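6. Corner coordinate conversion

6.1 Convert to the original ROI

The resize was a uniform scale-up, so dividing by the same factor maps the corners back: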

std::vector<cv::Point2f> corners_original;

for (size_t j = 0; j < corners.size(); ++j) {

corners_original.push_back(corners[j] / rect_scale);

}
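
One detail that may matter at sub-pixel accuracy: as far as I know, cv::resize with bilinear interpolation aligns pixel centers, so a source coordinate maps to (src + 0.5) * scale - 0.5 rather than src * scale. The sketch below, in plain C++, shows both the plain division used above and the center-aligned inverse. `Pt` and `resizedToRoi` are hypothetical names, and whether the correction is worthwhile for this pipeline is an assumption that should be checked against real data.

```cpp
#include <cassert>
#include <vector>

// Hypothetical stand-in for cv::Point2f.
struct Pt {
    float x, y;
};

// Map corners from the enlarged ROI back to the original ROI.
// halfPixelCorrection = false reproduces the plain division;
// true inverts the pixel-center mapping dst = (src + 0.5) * scale - 0.5.
std::vector<Pt> resizedToRoi(const std::vector<Pt>& corners, float scale,
                             bool halfPixelCorrection) {
    assert(scale > 0.0f);
    std::vector<Pt> out;
    out.reserve(corners.size());
    for (const Pt& c : corners) {
        if (halfPixelCorrection) {
            out.push_back(Pt{(c.x + 0.5f) / scale - 0.5f,
                             (c.y + 0.5f) / scale - 0.5f});
        } else {
            out.push_back(Pt{c.x / scale, c.y / scale});
        }
    }
    return out;
}
```

For a scale of 3 the two variants differ by a constant third of a pixel in each axis, which is small but of the same order as the sub-pixel refinement.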

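6.2 Convert to the BEV

The previous step gives coordinates in the original ROI; adding the ROI's x and y offsets yields BEV coordinates:

std::vector<cv::Point2f> corners_original;

std::vector<cv::Point2f> pt_in_original_vector;

for (size_t j = 0; j < corners.size(); ++j) {

corners_original.push_back(corners[j] / rect_scale);

pt_in_original_vector.push_back(corners[j] / rect_scale + cv::Point2f(rect_left.x, rect_left.y)); // offset by the ROI used for the crop (rect_left here, rect_right for the right target)

}

// Draw the corners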

for (size_t j = 0; j < pt_in_original_vector.size(); ++j) {

cv::circle(original_with_corners, pt_in_original_vector[j], 2, Scalar(0, 0, 255), 1, 1);

cv::putText(original_with_corners, std::to_string(j), Point(pt_in_original_vector[j].x + 5, pt_in_original_vector[j].y - 5), cv::FONT_HERSHEY_SIMPLEX, 0.4, Scalar(0, 255, 0), 1);

}

cv::imwrite("../debug/" + std::to_string(id) + "_original_corners_" + name + ".jpg", original_with_corners);

6.3 Convert to the original image

std::vector<cv::Point2f> original_pixel_vector;

for (size_t j = 0; j < pt_in_original_vector.size(); ++j) {

cv::Point2f bev_point = pt_in_original_vector[j];

cv::Point2f original_pixel = findOriginalImagePixel(bev_point.y, bev_point.x, id, CarBevInfo.bev_size, CarBevInfo.bev_range, rvec, tvec, ocam.K, ocam.D, ocam.xi, model->lidar_flag);

if (original_pixel.x >= 0 && original_pixel.x < original_with_all_points.cols && original_pixel.y >= 0 && original_pixel.y < original_with_all_points.rows) {

cv::circle(original_with_all_points, original_pixel, 3, cv::Scalar(0, 0, 255), -1);

original_pixel_vector.push_back(original_pixel);

}

}

cv::imwrite("../debug/" + std::to_string(id) + "_original_with_all_corners_" + name + ".jpg", original_with_all_points);

corners = original_pixel_vector;

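The function findOriginalImagePixel is closed source, but it is easy to write: just invert the logic used to generate the BEV. Drawing both targets on the same image gives the following:

Afterwards, these original-image pixel points can be undistorted and passed to solvePnP together with the corresponding 3D points to obtain more accurate parameters.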
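
As a rough idea of what inverting the BEV logic might involve, the first half is usually mapping a BEV pixel back to a point on the ground plane. The sketch below is not the closed-source function. It assumes a square BEV of `bevSizePx` pixels per side covering `bevRangeM` meters per side, centered on the vehicle, with rows increasing toward the rear and columns toward the right. `GroundPoint` and `bevPixelToGround` are hypothetical names, and the real layout may differ.

```cpp
#include <cassert>

// Hypothetical ground-plane point in meters (height z = 0 is implied).
struct GroundPoint {
    double forward;  // meters ahead of the BEV center
    double right;    // meters to the right of the BEV center
};

// Convert a BEV pixel (row, col) to ground-plane coordinates under the
// assumed layout: the image center is the origin, up in the image is forward.
GroundPoint bevPixelToGround(double row, double col, int bevSizePx,
                             double bevRangeM) {
    assert(bevSizePx > 0 && bevRangeM > 0.0);
    const double metersPerPixel = bevRangeM / bevSizePx;
    const double center = bevSizePx / 2.0;
    return GroundPoint{(center - row) * metersPerPixel,
                       (col - center) * metersPerPixel};
}
```

The second half would transform this ground point with the extrinsics (rvec, tvec) and project it through the camera model to get the pixel in the original image; that part depends on the camera model in use and is left out here.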

Last modified on 2026-01-19